Purposes and limitations of IVD tests in the context of a pandemic

Summary

- Since the start of the SARS-CoV-2 pandemic (the virus causing COVID-19), RT-PCR, IgM, Antigen test, Sensitivity, False Positive and other in-vitro diagnostic jargon have been front and centre in the news.

- However, in-vitro diagnostic Key Performance Metrics remain misunderstood by the general public and by the mainstream media.

- More concerning, the limitations of the different categories of tests often seem to be overlooked when Public Health authorities procure tests for mass-testing purposes.

Commentary:

Without an adequate understanding of the science behind testing and of why false positives and false negatives happen, we risk driving the public to mistrust public health and testing even further, and even to question their usefulness.

Knowledge is key to curb this pandemic!

1 - Purposes of testing

In the context of infectious diseases, testing serves four different purposes, carried out at different stages of the infection's progression and each requiring different test characteristics.

- Screening

“Screening interventions are designed to identify conditions which could at some future point turn into disease, thus enabling earlier intervention and management in the hope to reduce mortality and suffering from a disease”1.

Based on this definition, screening tests are typically carried out in a context of low prevalence, e.g. at airports.

- Public Health surveillance

“Public Health surveillance describes the continuous, systematic collection, analysis and interpretation of health-related data needed for the planning, implementation, and evaluation of public health practice."2

In the context of infectious diseases, the purpose of surveillance is to give early warning of outbreaks. This is done by sampling an at-risk segment of the population, followed by reporting and bioinformatics analysis. Based on this definition, Public Health surveillance tests are also carried out in a context of low prevalence.

- “Immunity Passport”

“Immunity certificates are a legal document granted by a testing authority following a serology test demonstrating that the bearer has antibodies making them immune to a disease.”

Based on this definition, tests for the purpose of delivering “Immunity Passports” may be carried out in settings of variable prevalence, from low to high.

Note: in the context of the SARS-CoV-2 pandemic, the WHO stated that: “There is currently no evidence that people who have recovered from COVID-19 and have antibodies are protected from a second infection.”3

- Medical diagnosis of symptomatic patients

The purpose of medical diagnosis is to explain a patient’s symptoms and signs and/or confirm their underlying cause, e.g. triage of patients with respiratory symptoms in A&Es (ERs).

Based on this definition, diagnostic tests are typically carried out in a context of high prevalence.

2 - Definitions of a test’s “accuracy”

- Lab-based (intrinsic) statistics:

The Analytical Performance of a test is primarily defined by its Accuracy, which results from the combination of its Sensitivity, or PPA (Positive Percent Agreement), and its Specificity, or NPA (Negative Percent Agreement).

| Test outcome | Condition positive (as determined by "Gold standard") | Condition negative (as determined by "Gold standard") | |
|---|---|---|---|
| Positive | True Positive | False Positive (Type I error) | → Positive predictive value |
| Negative | False Negative (Type II error) | True Negative | → Negative predictive value |
| | ↓ Sensitivity | ↓ Specificity | |

Table 1: Consolidation of False Positive, True Positive, False Negative, True Negative, PPV and NPV (Wikipedia)

See Appendix 1 for detailed definitions and interpretations or, if you prefer to hear a short tutorial, the one by Dr. David Felson, Professor of Medicine at the Boston University School of Medicine.

- False Negative: a false negative is an error in which a test result wrongly indicates that a condition is absent (the result is negative) when in reality it is present.

- False Positive: a false positive is an error in which a test result wrongly indicates that a condition, such as a disease, is present (the result is positive) when in reality it is absent.

- Sensitivity (PPA) reflects the false negative rate (Sensitivity = 1 - false negative rate) and is expressed as a percentage:

Out of 100 truly positive patients, how many are detected as positive by the test? The higher the sensitivity, the fewer the false negatives.

- Specificity (NPA) reflects the false positive rate (Specificity = 1 - false positive rate) and is expressed as a percentage:

Out of 100 truly negative patients, how many are detected as negative by the test?

The higher the specificity, the fewer the false positives. A typical cause of false positives in serology is cross-reactivity: the test detects an antibody response to a different but related coronavirus, so the individual is told that they have antibodies to COVID-19 when in fact these reflect infection with a different but similar coronavirus.

- Accuracy = (True Positives + True Negatives) / (All test results)
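As a minimal sketch of these definitions, the Python snippet below computes Sensitivity, Specificity and Accuracy from the four cells of Table 1. The `ConfusionCounts` type and the panel counts are hypothetical, chosen only for illustration; they are not data from any real assay.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Results of a test compared against a gold standard (hypothetical data)."""
    tp: int  # true positives
    fp: int  # false positives
    fn: int  # false negatives
    tn: int  # true negatives


def sensitivity(c: ConfusionCounts) -> float:
    # PPA: share of truly positive samples that the test calls positive
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    # NPA: share of truly negative samples that the test calls negative
    return c.tn / (c.tn + c.fp)


def accuracy(c: ConfusionCounts) -> float:
    # (TP + TN) / all test results
    return (c.tp + c.tn) / (c.tp + c.fp + c.fn + c.tn)


# Hypothetical validation panel: 100 truly positive and 100 truly negative samples
panel = ConfusionCounts(tp=90, fp=5, fn=10, tn=95)
print(f"Sensitivity: {sensitivity(panel):.0%}")  # 90%
print(f"Specificity: {specificity(panel):.0%}")  # 95%
print(f"Accuracy:    {accuracy(panel):.1%}")     # 92.5%
```

The false negative rate is then simply 1 - sensitivity (10% here) and the false positive rate 1 - specificity (5% here).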

Commentary:

- False positive results on a COVID-19 test can cause undue stress on individuals as they try to navigate their diagnosis, take days off work and isolate from their family.

- False negative test results are even more dangerous, as people may think it is safe and appropriate for them to engage in social activities, hence contributing to the spread of the virus.

Sensitivity and Specificity are therefore Lab-based metrics that presuppose that we already know whether the patient/sample is positive or negative. These statistics, although often reported by the media (see Appendix 2 for a review of three mainstream media articles), cannot on their own tell us how good a test is at predicting the disease state of a person. In fact, Sensitivity and Specificity tell us what happens when we already know what the right answer should have been.

To drive the point home, think about this: in a population in which no one has the disease, even a test that is terrible at detecting the disease will appear to work well, because it will “correctly” identify almost everyone as not having the disease.
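The point can be checked numerically. This minimal Python sketch scores a hypothetical "test" that returns negative for everyone, in a hypothetical population of 10,000 people with a prevalence of 1 in 1,000 (both figures are assumptions for illustration): its accuracy comes out at 99.9% even though it misses every single case.

```python
def score_always_negative(population: int, prevalence: float) -> dict:
    """Score a hypothetical test that returns 'negative' for every person."""
    diseased = round(population * prevalence)
    healthy = population - diseased
    tp, fn = 0, diseased  # every diseased person is missed
    tn, fp = healthy, 0   # every healthy person is "correctly" negative
    return {
        "accuracy": (tp + tn) / population,
        "sensitivity": tp / (tp + fn) if diseased else float("nan"),
        "specificity": tn / (tn + fp) if healthy else float("nan"),
    }


result = score_always_negative(population=10_000, prevalence=0.001)
print(result)  # {'accuracy': 0.999, 'sensitivity': 0.0, 'specificity': 1.0}
```

A headline accuracy of 99.9% therefore says nothing useful here: it mostly reflects how rare the disease is.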

Commentary:

- It is erroneous and misleading to use the above Lab-based intrinsic statistics to estimate the “accuracy” of a test as a diagnostic tool, i.e. to determine whether or not a person carries a disease.

This common mistake is illustrated by the following simple numerical example:

Disease prevalence: 1/1,000

Accuracy: 95% (Sensitivity: 100%, Specificity: 95%)

Question:

A person tests positive. What is the probability that this person has the disease?

a) 100%

b) 95%

c) 5%

d) 2%

(answer in Appendix 3)

- “Real-world” Predictive Statistics:

In real life, the true positive or true negative status of the patient is, by definition, unknown. The Lab-based statistics of Sensitivity and Specificity above are therefore of little use on their own for interpreting a given result.

- The probability that a Positive result actually corresponds to a Positive patient is measured by the Positive Predictive Value (PPV).

- Conversely, the probability that a Negative result actually corresponds to a Negative patient is measured by the Negative Predictive Value (NPV).

- Positive Predictive Value (PPV):

The Positive Predictive Value is the probability that a subject/sample that returns a positive result is really positive.

Or, in other words, the PPV is the proportion of positive results of the test that are true positive results.

- Negative Predictive Value (NPV):

The Negative Predictive Value is the probability that a subject/sample that returns a negative result is really negative.

Or, in other words, the NPV is the proportion of negative results of the test that are true negative results.

The Role of Prevalence:

A critical concept is that the PPV and NPV depend on the prevalence of the disease. The rarer the condition, the more likely a negative test result is truly negative, and the less likely a positive test result is truly positive.

Higher prevalence (the proportion of people in a population who have the condition at any one time) increases the PPV but decreases the NPV.
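The effect of prevalence can be illustrated with the PPV and NPV formulas given in Appendix 1. This minimal Python sketch holds a hypothetical test at 95% sensitivity and 95% specificity (values assumed for illustration only) and varies the prevalence.

```python
def ppv(sensitivity: float, specificity: float, prevalence: float) -> float:
    # Probability that a positive result is a true positive
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


def npv(sensitivity: float, specificity: float, prevalence: float) -> float:
    # Probability that a negative result is a true negative
    true_neg = specificity * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    return true_neg / (true_neg + false_neg)


SENS, SPEC = 0.95, 0.95  # hypothetical test characteristics
for prevalence in (0.001, 0.01, 0.10, 0.50, 0.90):
    print(f"prevalence {prevalence:6.1%}  "
          f"PPV {ppv(SENS, SPEC, prevalence):6.1%}  "
          f"NPV {npv(SENS, SPEC, prevalence):6.1%}")
```

With the same test, the PPV climbs from under 2% to over 99% across this range of prevalence, while the NPV drops from almost 100% to under 70%.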

Appendix 1 explains why the PPV provides a meaningful assessment of a test's ability to estimate the probability that a positively-tested person has the targeted disease.

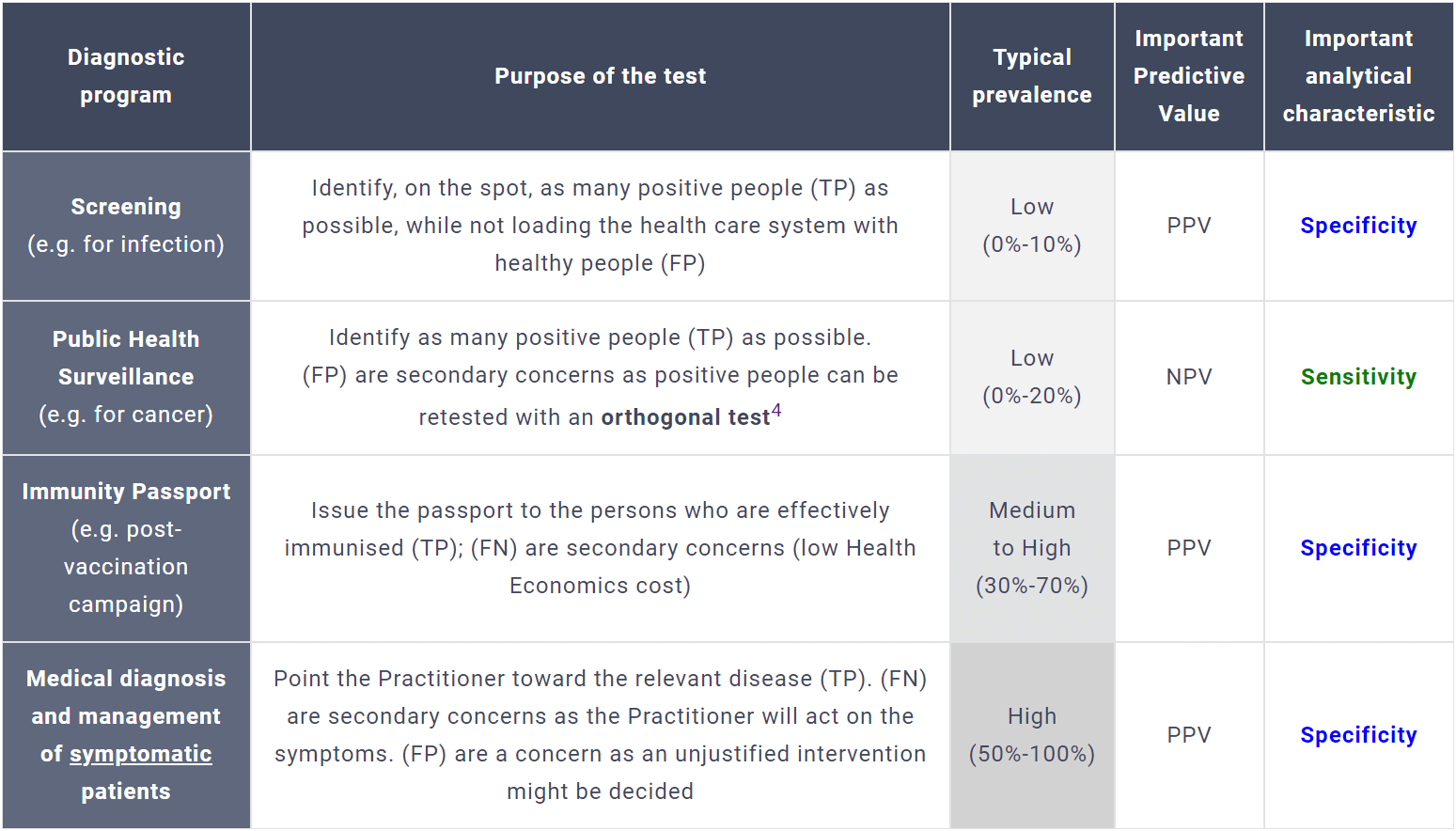

The relevance of NPV and PPV in the diagnostic programs described above is summarised in Table 2.

| Diagnostic program | Purpose of the test | Typical prevalence | Important predictive value | Important analytical characteristic |
|---|---|---|---|---|
| Screening (e.g. for infection) | Identify, on the spot, as many positive people (TP) as possible, while not loading the health care system with healthy people (FP) | Low (0%-10%) | PPV | Specificity |
| Public Health Surveillance (e.g. for cancer) | Identify as many positive people (TP) as possible; (FP) are secondary concerns as positive people can be retested with an orthogonal test (note 4) | Low (0%-20%) | NPV | Sensitivity |
| Immunity Passport (e.g. post-vaccination campaign) | Issue the passport to the persons who are effectively immunised (TP); (FN) are secondary concerns (low Health Economics cost) | Medium to High (30%-70%) | PPV | Specificity |
| Medical diagnosis and management of symptomatic patients | Point the Practitioner toward the relevant disease (TP); (FN) are secondary concerns as the Practitioner will act on the symptoms; (FP) are a concern as an unjustified intervention might be decided | High (50%-100%) | PPV | Specificity |

Table 2: Key predictive value for various diagnostic programs

Commentary:

As the true prevalence is not known at the outset, and can change over time, diagnostic tests always play catch-up with reality and should be deployed in phases (different tests at different prevalence levels).

3 - Clinical relevance:

- Selecting the right biomarker:

However accurate, a test will be irrelevant and potentially misleading if it is not carefully selected according to the hypothesised disease and the probable stage of its natural history.

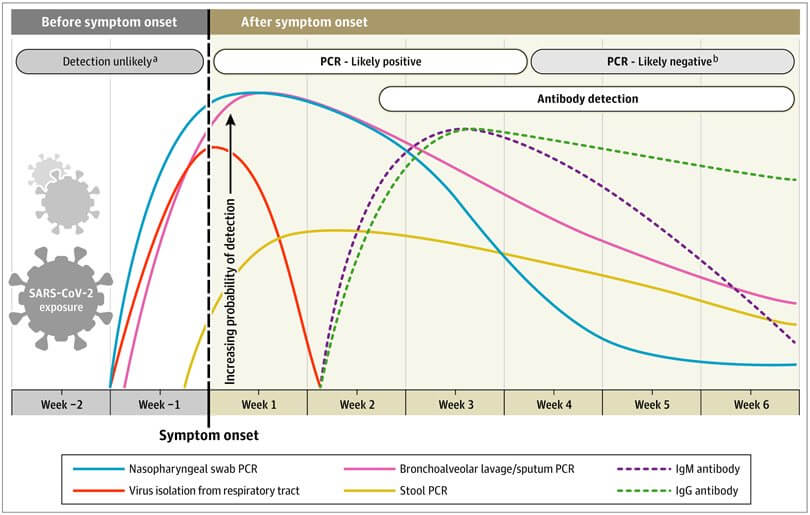

For example, in the context of the SARS-CoV-2 pandemic, serology tests, which detect antibodies produced in response to the infection, tell us one thing: that the person was infected three to seven weeks before the positive test.

Graph #1: Estimated variation over time in diagnostic tests for detection of SARS-CoV-2 infection relative to symptom onset

While such information may be useful to estimate the level of acquired immunity in a population (“herd immunity”), it is useless as a triage tool: the presence of antibodies does not mean that the patient is still infected or infectious.

Yet millions and millions of dollars have been spent procuring such tests, resulting not only in a waste of public money but also in misguided actions.

- Understanding the technology of the test:

Just as a map is a representation of the territory, a diagnostic test is a representation of a biological reality. The targeted biomarker, the detection method and the decision algorithm all shape its topography.

The debate around the number of amplification cycles (the cycle threshold, or Ct value) used in Nucleic Acid Amplification Tests (NAAT) offers an excellent illustration of the risk of disconnection between a Positive result and the underlying clinical reality.

In order to reach a detectable level of nucleic acids (DNA or RNA), NAAT tests (PCR, rRT-qPCR, TMA, LAMP, CRISPR...) exponentially multiply, through iterative cycles, the number of copies of the targeted strands.

A 3-point increase in Ct value is roughly equivalent to a 10-fold decrease in the quantity of viral genetic material5. Therefore the higher the Ct, the lower the initial load of DNA or RNA targets.
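The link between Ct and viral load follows from the exponential amplification described above. Assuming idealised amplification in which every cycle exactly doubles the number of copies (real assays run below 100% efficiency, hence the "roughly"), a difference of ΔCt cycles corresponds to a (1 + E)^ΔCt-fold difference in starting material, where E is the per-cycle efficiency. The Python sketch below is illustrative only; the `efficiency` parameter is an assumption, not a property of any specific assay.

```python
import math


def fold_change(delta_ct: float, efficiency: float = 1.0) -> float:
    """Fold difference in starting target implied by a Ct difference.

    efficiency = 1.0 assumes perfect doubling at every cycle (an idealisation).
    """
    return (1 + efficiency) ** delta_ct


def cycles_for_fold(fold: float, efficiency: float = 1.0) -> float:
    """Extra cycles needed to reach threshold from `fold` times less target."""
    return math.log(fold) / math.log(1 + efficiency)


print(fold_change(3))                 # 8.0: 3 extra cycles ~ 8-fold less material
print(round(cycles_for_fold(10), 2))  # 3.32: about 3.3 cycles per 10-fold
print(round(fold_change(35 - 25)))    # 1024: Ct 35 vs Ct 25, roughly 1,000-fold
```

A sample with a Ct of 35 thus starts with roughly a thousand times less target than one with a Ct of 25, under these idealised assumptions.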

• Low Ct values (high viral load) more likely indicate acute disease and high infectivity.

• High Ct values (low viral load) can be attributed to several clinical scenarios whereby the risk of infectivity may be reduced but interpretation requires clinical context.

Due to the diversity of primers, enzymes and amplification methods, Ct values are not directly comparable between assays.

For example, in the US, Quest Diagnostics and LabCorp, among the largest providers of clinical laboratory services, have set their Ct thresholds at 40 and 38 respectively.

Some experts believe the threshold should be even lower:

“If you get a cycle threshold of 35 or more, the chances of it being replication-competent are minuscule…”

Dr. Anthony Fauci, Director of the National Institute of Allergy and Infectious Diseases

(“Replication-competent” means particles capable of infecting cells and replicating to produce additional infectious particles.)

Whatever the Ct threshold, a single positive NAAT result must be interpreted in the context of the clinical history, whether it is used for staging the course of infection, for prognosis, for assessing infectivity or as an indicator of recovery.

Appendix 1: PPV & NPV definitions and formulas

- Negative Predictive Value (NPV):

The negative predictive value is the proportion of negative results of the test that are true negative results.

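$$\mathrm{NPV} = \frac{\text{number of true negatives}}{\text{number of true negatives} + \text{number of false negatives}}$$

$$\mathrm{NPV} = \frac{\text{specificity} \times (1 - \text{prevalence})}{(1 - \text{sensitivity}) \times \text{prevalence} + \text{specificity} \times (1 - \text{prevalence})}$$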

- Positive Predictive Value (PPV):

The positive predictive value is the proportion of positive results of the test that are true positive results.

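$$\mathrm{PPV} = \frac{\text{number of true positives}}{\text{number of true positives} + \text{number of false positives}}$$

$$\mathrm{PPV} = \frac{\text{sensitivity} \times \text{prevalence}}{\text{sensitivity} \times \text{prevalence} + (1 - \text{specificity}) \times (1 - \text{prevalence})}$$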
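The two forms of each formula are equivalent: the prevalence-based version is the count-based version with every count divided by the population size. A short Python check, using a hypothetical population and hypothetical test characteristics, and exact fractions so that no rounding noise hides the equality:

```python
from fractions import Fraction


def predictive_values_from_counts(tp, fp, fn, tn):
    """Count-based definitions: PPV = TP/(TP+FP), NPV = TN/(TN+FN)."""
    return tp / (tp + fp), tn / (tn + fn)


def predictive_values_from_rates(sens, spec, prev):
    """Prevalence-based definitions, as in the formulas above."""
    ppv = sens * prev / (sens * prev + (1 - spec) * (1 - prev))
    npv = spec * (1 - prev) / ((1 - sens) * prev + spec * (1 - prev))
    return ppv, npv


# Hypothetical population and test (values chosen for illustration only)
population = 100_000
sens, spec, prev = Fraction(9, 10), Fraction(98, 100), Fraction(5, 100)
diseased = population * prev
healthy = population - diseased
tp, fn = sens * diseased, (1 - sens) * diseased
tn, fp = spec * healthy, (1 - spec) * healthy

# Both routes give exactly the same predictive values
assert predictive_values_from_counts(tp, fp, fn, tn) == predictive_values_from_rates(sens, spec, prev)
ppv, npv = predictive_values_from_rates(sens, spec, prev)
print(f"PPV = {float(ppv):.1%}, NPV = {float(npv):.1%}")  # PPV = 70.3%, NPV = 99.5%
```

Even a fairly specific test (98%) yields a PPV of only about 70% at 5% prevalence in this example.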

As the formulas show, neither the NPV nor the PPV is an intrinsic characteristic of the test's analytical performance; both also depend on the prevalence of the disease.

- the positive post-test probability (the probability of having the target condition if the test comes out positive) is numerically equal to the PPV;

- the negative post-test probability (the probability of having the target condition if the test comes out negative) is numerically complementary to the negative predictive value ([negative post-test probability] = 1 - [NPV]).

The respective curves of negative post-test probability and positive post-test probability (PPV) as a function of the prevalence are shown in graph 2.

Interpretation:

For a given expected prevalence of the disease (the pre-test probability, on the horizontal axis), going vertically up to the lower curve and then reading across to the vertical axis gives the negative post-test probability.

Likewise, going vertically up to the upper curve and reading across gives the PPV.

It follows from these curves that the PPV provides a more meaningful assessment of a test's ability to estimate the probability that a person has the targeted disease.

Orthogonal tests:

Two complementary tests that react to two different biomarkers of the same disease. Using orthogonal tests consecutively (retesting the positives of the first test with the second) significantly improves the specificity, and therefore the PPV.
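The gain can be sketched under a simplifying assumption that is not stated in the source: that the two tests make their errors independently given the true disease status. In a serial algorithm where a person is called positive only if both tests are positive, the combined specificity is then 1 - (1 - Sp1)(1 - Sp2) and the combined sensitivity is Se1 × Se2. The test characteristics and the 1% prevalence below are hypothetical.

```python
def serial_combination(se1, sp1, se2, sp2):
    """Combined sensitivity/specificity when a positive requires BOTH tests positive.

    Assumes the two tests err independently given true status (an idealisation;
    targeting different biomarkers is what makes this more plausible).
    """
    sensitivity = se1 * se2                  # a true case must be detected by both tests
    specificity = 1 - (1 - sp1) * (1 - sp2)  # a false positive must fool both tests
    return sensitivity, specificity


def ppv(sens, spec, prev):
    return sens * prev / (sens * prev + (1 - spec) * (1 - prev))


prev = 0.01  # hypothetical 1% prevalence
se, sp = serial_combination(0.95, 0.95, 0.95, 0.95)
print(f"single test:          PPV = {ppv(0.95, 0.95, prev):.1%}")
print(f"two orthogonal tests: sens = {se:.1%}, spec = {sp:.2%}, PPV = {ppv(se, sp, prev):.1%}")
```

With these figures the PPV rises from about 16% to nearly 80%, at the cost of a lower combined sensitivity (about 90%). Correlated errors between the two tests would make the real gain smaller.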

https://en.wikipedia.org/wiki/False_positives_and_false_negatives#true_positive

https://en.wikipedia.org/wiki/Pre-_and_post-test_probability

Appendix 2: Review of media articles by www.healthnewsreview.org

- “This Guardian story touted an experimental blood test that was said not only to ‘detect autism in children’ but also ‘could lead to earlier diagnosis.’ Our reviewers noted that the story, based on a study of just 38 children, offered no data to back up its claim; nor did it warn of the harm that a false-positive or false-negative result could inflict on children and their parents.”

https://www.healthnewsreview.org/review/guardian-trumpets-test-diagnose-autism-wheres-evidence

- “Similar concerns were raised about this USA Today story about a genetic test for breast cancer. Reviewers said the story ‘doesn’t offer much information that readers can use to make decisions about the use of the recently-approved 23andMe test. For example, what is the rate of false-positive results? Or false negatives? What does that mean for actual risk of developing breast cancer?’”

https://www.healthnewsreview.org/review/usa-today-rehashes-pr-talking-points-in-story-on-at-home-breast-cancer-gene-test

- “A New York Presbyterian Hospital news release touted a test that ‘detects prostate cancer with 92 percent accuracy.’ But as pointed out on our blog, the 92% figure represents the sensitivity of the test – not the accuracy – which is a very different concept.”

https://www.healthnewsreview.org/2015/06/more-accurate-than-traditional-biopsies-and-other-misinformation-about-a-new-prostate-cancer-test-from-ny-presbyterian-hospital/

Appendix 3: Quiz

Numerical example:

Disease prevalence: 1/1,000

Accuracy: 95%

Sensitivity: 100%

Specificity: 95%

Question:

A person tests positive. What is the probability that this person has the disease?

(a) 100%

(b) 95%

(c) 5%

(d) 2%

Answer: (d) 2%

Consider the implied statistics for a population of 1,000 persons:

| Population = 1,000 | Disease + | Disease - |
|---|---|---|
| Test + | TP: 1 | FP: 50 |
| Test - | FN: 0 | TN: 949 |

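$$\mathrm{NPV} = \frac{TN}{TN + FN} = \frac{949}{949 + 0} = 100\%$$

$$\mathrm{PPV} = \frac{TP}{TP + FP} = \frac{1}{1 + 50} \approx 2\%$$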
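The same answer follows directly from the PPV formula in Appendix 1, without rounding the counts to whole persons. A minimal Python check using the quiz's own figures:

```python
prevalence = 1 / 1_000
sensitivity = 1.00
specificity = 0.95

true_pos = sensitivity * prevalence               # share of population: diseased and positive
false_pos = (1 - specificity) * (1 - prevalence)  # share of population: healthy but positive
ppv = true_pos / (true_pos + false_pos)

print(f"P(disease | positive) = {ppv:.2%}")  # 1.96%
```

The exact value, about 1.96%, is what the rounded table above gives as roughly 2%, even though the test's "accuracy" is 95%.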

1 https://apps.who.int/iris/bitstream/handle/10665/37650/WHO_PHP_34.pdf?sequence=17

2 https://www.who.int/emergencies/surveillance

3 https://www.who.int/news-room/commentaries/detail/immunity-passports-in-the-context-of-covid-19

4 An orthogonal test is a second test that targets a different biomarker that is relevant to the hypothesised disease. More details in: Evaluation of Orthogonal Testing Algorithm for Detection of SARS-CoV-2 IgG Antibodies; Clinical Chemistry 66:12 Infectious Disease 1531–1537 (2020)

5 Understanding cycle threshold (Ct) in SARS-CoV-2 RT-PCR: a guide for health protection teams; Ref: PHE publications gateway number: GW-1651